Cloud Application Architectures

Infrastructure cloud services offer powerful capabilities for using infrastructure resources in a highly dynamic way. Instead of minimum usage periods of months or even years, usage can be limited to only a couple of hours. These capabilities allow an application to adjust its infrastructure usage dynamically to current requirements, but they also require the application to comply with some cloud architecture principles. In this post we briefly describe the different application architectures and discuss their upsides and downsides.

Basic requirements

Besides complying with these architecture principles, an application must meet certain requirements for an infrastructure cloud to be a useful underlying infrastructure. At least one of the following criteria must apply to the cloud application: dynamic scalability, broad network access, or high failover requirements. If none of them applies to the application in question, the infrastructure cloud is not an appropriate runtime environment.

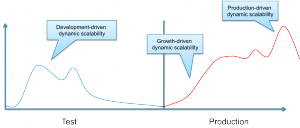

The requirement to scale an application dynamically can come from different sources. Many applications require varying amounts of infrastructure because of their business logic (e.g. in the case of scientific calculations) or because of user interaction (e.g. in the case of web applications).

Furthermore, over the entire lifecycle of an application, the need for dynamic resources is triggered in different ways, as depicted in the figure above.

Broad network access is often required for applications that are accessed by a huge number of users from different networks. In this case, placing the application in the cloud makes access simpler to implement and more secure.

Finally, the cloud can help to achieve very high availability. When a single application with multiple tiers is deployed across a set of decoupled datacenters, its availability can be raised to a higher level: assuming the datacenters fail independently, the combined risk (of both datacenters failing at the same time) is the product of the individual risks.
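The arithmetic behind this argument can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this post, the 99% figure is an assumed example value, and the calculation holds only if the datacenters really do fail independently of each other.

```python
from math import prod


def combined_failure_probability(failure_probabilities):
    """Probability that all datacenters are down at the same time.

    Assumes the individual failures are statistically independent.
    """
    return prod(failure_probabilities)


def combined_availability(availabilities):
    """Availability of an application deployed across all given datacenters."""
    return 1.0 - combined_failure_probability(1.0 - a for a in availabilities)


# Illustrative input: two datacenters, each assumed to be available 99% of the time.
print(combined_availability([0.99, 0.99]))
```

With these assumed numbers, each datacenter is unavailable 1% of the time, so both are unavailable together about 0.01% of the time, which gives a combined availability of roughly 99.99%.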

Architectural Principles

Having reviewed the basic requirements for an application to be usefully deployed in the cloud, we now turn to principles of application architecture that help an application make use of the cloud's capabilities.

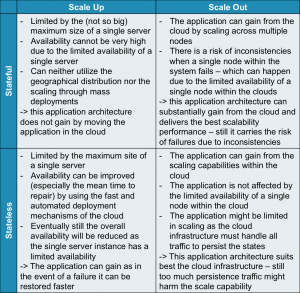

There are two main aspects: state management and scalability. The following table shows how these principles

Stateful vs. Stateless

Stateful systems hold substantial information about the current state of the application in memory or in a cache, where it cannot be recovered after the system is restarted. Stateless systems, in turn, keep as little data as possible in such non-recoverable storage. Stateless applications usually persist all of their data immediately and keep very little information “in flight”. Their big advantage is that recovery is much simpler: when a stateless system has to be restarted after an uncontrolled stop, it can simply restore its state from the data repository. If the system is built in a strictly stateless way, there is no recovery process at all, because every operation starts without an initial state and takes its input values from the data repository. Stateless systems therefore place less rigid requirements on the underlying infrastructure.
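The difference can be made concrete with a small Python sketch. All names here are hypothetical, and `DictRepository` is merely an in-memory stand-in for a durable data repository such as a database, so that the example stays self-contained.

```python
class StatefulCounter:
    """Stateful: the count lives only in process memory and is lost on restart."""

    def __init__(self):
        self.count = 0

    def increment(self):
        self.count += 1
        return self.count


class DictRepository:
    """Hypothetical stand-in for a durable data repository."""

    def __init__(self):
        self._data = {}

    def load(self, key, default=0):
        return self._data.get(key, default)

    def save(self, key, value):
        self._data[key] = value


def stateless_increment(repository, key):
    """Stateless: read the input from the repository, persist the result at once."""
    value = repository.load(key) + 1
    repository.save(key, value)
    return value
```

Replacing a `StatefulCounter` object with a fresh one (a simulated restart) resets the count to zero, whereas `stateless_increment` continues from the persisted value without any recovery step, because it never held the state in the first place.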

Vertical vs. Horizontal

Application design can also differ fundamentally in how scalability is achieved. An application can either scale up or scale out. Scaling up means increasing the performance of a single operating system instance to support the higher demands of the application. Scaling out means adding further operating system and application instances, for example in a cluster, with the application coordinating the load distribution. Typical examples of scale-out architectures are web server clusters that serve a single website.
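As a rough illustration of scaling out, the following Python sketch distributes requests across a growing set of instances. The `Cluster` class and the instance names are invented for this example, and round-robin is just one assumed distribution strategy; real clusters may coordinate load quite differently.

```python
class Cluster:
    """Minimal scale-out sketch: requests are spread over instances in turn."""

    def __init__(self, instance_names):
        self.instances = list(instance_names)
        self._next = 0  # index of the next request, used for round-robin

    def add_instance(self, name):
        """Scale out by adding another application instance."""
        self.instances.append(name)

    def route(self, request):
        """Return the name of the instance that should handle the request."""
        instance = self.instances[self._next % len(self.instances)]
        self._next += 1
        return instance


cluster = Cluster(["web-1", "web-2"])
first_routes = [cluster.route(f"request-{i}") for i in range(4)]
cluster.add_instance("web-3")  # capacity grows without changing existing instances
```

Scaling up, by contrast, would leave the list of instances unchanged and give a single instance more CPU or memory, which is not something the application code itself expresses.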

The two scaling principles are depicted in the figure below.

Both scalability designs have advantages and disadvantages. A short comparison can be found in the table below.

| Scale Up | Scale Out |
|---|---|
|  |  |

Looking at the two aspects (state management and scalability) together, many applications that scale out follow the principles of statelessness, whereas many scale-up applications hold much more state within the application.

The application architecture for the cloud

How can a design decision for the cloud be derived from the requirements and the architectural principles? First, the physical design of infrastructure clouds has to be reviewed briefly.

Infrastructure clouds are often built from standard-sized x86 servers, each of which is neither very powerful nor particularly highly available. The power of the cloud comes from the large mass of similar servers combined in a single cloud. This cloud can reside within one datacenter or span multiple locations. The latter setup is often found when the size of the cloud makes it difficult to install all servers and storage pools within one datacenter.

The following table shows how the principles match the infrastructure setup and how they support the requirements.

In conclusion, the cloud requires a scale-out application architecture. The state management of the application depends on a trade-off between the consistency requirement and the scalability requirement. Many cloud applications follow a partially stateless model, in which the important data is persisted immediately whereas other data elements are not persisted and will be lost if a single node fails.
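A partially stateless node might look roughly like the Python sketch below. Everything in it is an assumption made for illustration: the `Node` class, the choice of orders as the "important" data and view counters as the expendable data, and the plain dictionary that stands in for a shared, durable repository.

```python
class Node:
    """One application node in a partially stateless design (hypothetical)."""

    def __init__(self, repository):
        self.repository = repository  # shared store, assumed to be durable
        self.view_counts = {}         # node-local memory, lost if the node fails

    def place_order(self, order_id, order):
        """Important data: persisted immediately in the shared repository."""
        self.repository[order_id] = order

    def track_view(self, product_id):
        """Less important data: kept only in this node's memory."""
        self.view_counts[product_id] = self.view_counts.get(product_id, 0) + 1


shared_repository = {}  # stand-in for a real database
node = Node(shared_repository)
node.place_order("order-1", {"item": "book", "quantity": 2})
node.track_view("book")

# Simulated node failure: a replacement node starts with empty local memory
# but still sees everything that was persisted.
replacement = Node(shared_repository)
```

The replacement node can read `order-1` from the shared repository, while the view counter collected by the failed node is gone. Which data belongs in which category is exactly the consistency versus scalability trade-off described above.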